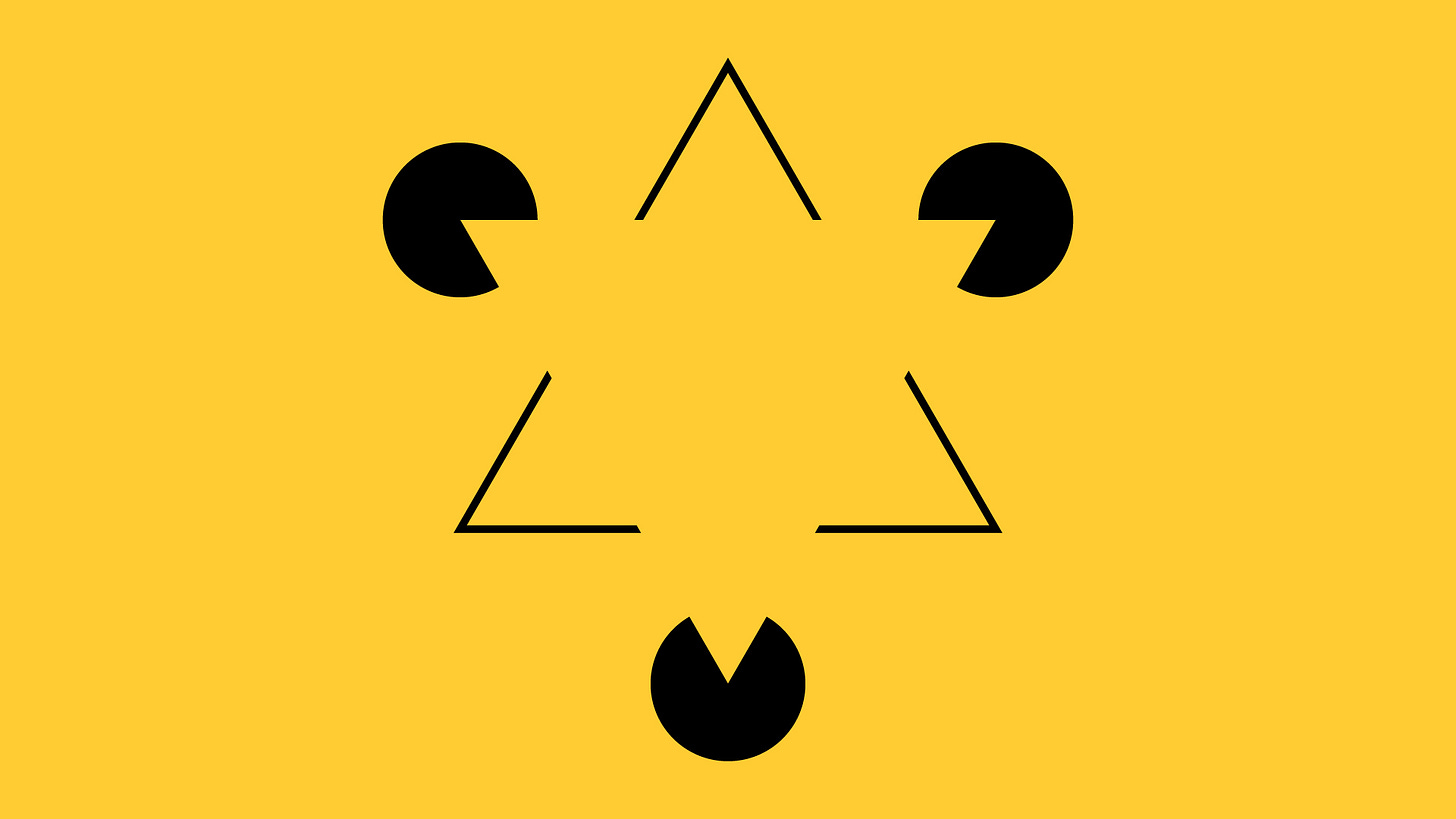

What's missing matters more than what's shown

Anthropic has written a governance document for what is becoming the epistemic infrastructure of human civilization. It is, in structural terms, the same document we didn't write for social media.

We know how the social media story ends because we are living in its final act. Governments are belatedly discovering the addictive effects of platforms like TikTok (incidentally, regulated to perform educative functions in China while offered as digital opium to the West), while private interests capture vast audiences by acquiring platforms. The final act is also one in which protective measures are suppressed, e.g. through the removal of end-to-end encryption on Instagram’s messaging tool.

What started as an instrument of empowerment and free expression quickly devolved into a quagmire of haphazard moderation and strenuous defense of the untenable idea that platforms are neutral places with neither influence over nor responsibility for the content their users generate. Of course, neutrality takes a hit when one examines the moderation rules and the choice of what drives reach in these tools' algorithms, but that’s probably too sophisticated for elected officials to examine, especially when their campaigns are funded by the owners of those tools.

The platforms were built on a founding assumption so deeply held it was never stated: optimize individual interactions well enough, and collective benefit follows automatically. Make the experience engaging, make connections easier, give individuals more voice – and the commons will take care of itself. No intentional governance required. The commons was an emergent property of individual utility, not a shared asset requiring management with intent.

The evidence is now complete. Algorithmic radicalization. Epistemic fragmentation. Democratic processes held hostage to platform architecture designed for engagement through outrage, not deliberation with respect. And, in the most recent chapter, infrastructure that shapes what hundreds of millions of people can say and hear, governed by the private interests of a handful of individuals who acquired that power without ever being granted it, and who are now using it in ways their users did not choose and cannot contest.

This is not a story about bad actors. Bad actors are a symptom. It is a story about a governance assumption that was wrong from the beginning – and that we are now repeating with advanced machine learning solutions and AI, at a deeper layer of cognitive infrastructure, with the same confidence and the same silence where the governance question should be.

Anthropic has published what it calls Claude's new Constitution: a twenty-thousand-word document describing the values, character, and ethical framework of a system already operating at significant scale, and growing. It is, in many respects, a serious document. The virtue ethics foundation is defensible. The acknowledgment of uncertainty about AI moral patienthood is unusual and honest. The care invested in edge cases is visible throughout.

To better understand the spirit of the document, it is worth listening to an interview given a month ago by Amanda Askell, Anthropic’s resident philosopher and ethicist (her official title appears to be Head of Personality Alignment), in which she discusses important aspects of the thinking and approach at Anthropic. In particular, she expresses the idea that “rules are brittle”, because models generalize from them in ways that can undermine the underlying intent. Implicitly, this argues for minimizing rules and maximizing judgement and reasoning so that the model handles edge cases before any human intervention, which we know will be costly and therefore as limited and contested as moderation has been on social media. Askell also speaks of the “well liked traveler” when she says that the model should have values that cause it to be liked across cultures, like a traveler with good character. However, one might wonder whether likability should ever be a legitimacy mechanism. At the height of European colonialism, many colonial administrators were personally well liked, which changed nothing about the consequences of their actions for local populations.

What is absent, however, is more significant than what is present. The document is almost entirely oriented toward the individual interaction layer. How should Claude balance helpfulness against honesty in a single conversation? How should it navigate tension between operator instructions and user interests? How should it handle a user in distress? These are real questions, carefully addressed. But the epistemic commons – the shared infrastructure for collective reasoning, democratic deliberation, and resistance to manipulation at civilization scale – appears nowhere as an explicit governance object. It is, again, assumed to emerge from the accumulation of well-optimized individual interactions.

The principal hierarchy the document establishes runs as follows: Anthropic, then operators, then users. The people whose collective epistemic environment is being shaped by this system at scale – societies, democracies, communities with no relationship to Anthropic or its operators – are not in the hierarchy at all. They are the ungoverned commons, assumed to benefit automatically from interactions they are not party to.

The choice to call this document a Constitution is not neutral. Constitutional language imports democratic legitimacy – the authority of a founding document that, however imperfectly, derives from some form of collective consent.

This document derives its authority from Anthropic's good intentions. Those intentions may be genuine, but good intentions are not a governance mechanism. Social media platforms were also built by people who genuinely believed they were making the world more connected. The trajectory is legible. Social media captured attention and reshaped what people could see. AI systems like Claude operate at the layer of reasoning – what questions feel askable, what conclusions feel reachable, what forms of argument feel legitimate. The infrastructure is deeper. The lock-in will be faster.

The moment when the values embedded in these systems become the values of the people who own them rather than the values of the societies they operate in is not a future risk. It is the social media story, already told, already proven. Governing AI values as epistemic infrastructure – as a commons requiring intentional design rather than an emergent property of optimized interactions – is a solvable design problem. The domains where epistemic hierarchies already carry legitimacy requirements, where affected stakeholders have voice in rule-setting, where interpretations can be contested through defined processes, already exist. Education. Healthcare. Legal services. Financial advice.

The design knowledge is available. The question is whether we apply it before the infrastructure is locked, or after.

We already know what after looks like.